Allow me to preface this by saying that I’m not on the “anti-A.I.” bandwagon by any stretch. While I’m sceptical, to a reasonable degree, about some of today’s large language models, and whether they can really do as much as investors have been promised, I see the potential in A.I. in a lot of ways. I don’t want this piece – discussing one very specific use of A.I. – to be misunderstood! In fact, I’d argue that anyone who claims to be “anti-A.I.” in every possible case doesn’t actually understand what A.I. is and how broad a category it is; it would be like saying you’re “anti-computer,” or “anti-electricity.” The uses for A.I. are vast – it’s an incredibly big category of inventions.

So what are we getting into today, then? If you missed the announcement, graphics card manufacturer (and major supplier of components to A.I. datacentres) Nvidia has recently shown off its new A.I.-powered DLSS 5 – a graphical overlay for some video games, which is intended to add more “realism” to environments, character models, and faces.

And… to be blunt, I think it looks like shit.

Some of today’s generative A.I. models can create photorealistic landscapes, creatures, and even people. A.I. art is a big topic in and of itself, and it can be quite controversial, so we won’t get into all of the arguments around it. Suffice to say that, as someone who runs a small website as a hobby, the only times I’ve used A.I. art (that I’m aware of, anyway) are in a couple of my other articles discussing A.I. – and that was a deliberate choice to help illustrate a point I was making. I’m not actively opposed to A.I. art in all cases; as with any subject, it’s not a black-or-white thing. Not all photographs are “art,” but some can be – and I would suggest A.I. art is probably in that same kind of space.

But we’re off-topic already!

DLSS 5, according to Nvidia, is intended to increase the “visual fidelity” of video games, and the company claims it’s their most significant innovation since real-time ray-tracing almost nine years ago. DLSS 5 uses generative A.I. in some form – the exact details are not clear – and seems to work as a kind of “middle man” during the rendering of frames, upscaling, adding detail, and trying to give games a more photorealistic look.

On the surface, this sounds like a useful invention, right? At least for *some* games, anyway. Game developers have been chasing photorealism since, really, the very dawn of video games and computer-generated imagery, so any new innovation that brings us closer to that goal should be a cause for celebration. Only… well, is DLSS 5 *actually* making things photorealistic? Or is it simply adding a filter?

The screenshots Nvidia provided – which, I would note, will have been *very* carefully selected to show the new tech in the best possible light – all feel, well, kinda samey. And that’s despite the games selected to show off this new technology all being pretty different from one another in terms of art style. Yes, all of the games in question were aiming for some measure of photorealism, but there are incredibly important differences in the way they use more subtle things like light, shading, facial animations, and so on. If DLSS 5 smooths all of that out, resulting in games that look indistinguishable from one another… I’m not sure I’d call that a “breakthrough.”

To Nvidia’s credit, they claim, in their marketing blurb, that DLSS 5 is meant to be “tightly grounded in the game developer’s 3D world and artistic intent.” But based on the screenshots and video that Nvidia itself provided as part of this announcement, I gotta be honest: I’m not seeing that. I see a filter that smooths out a game’s rough edges, sure, and definitely adds more detail – but if those details are all the same, and the end result is that faces in particular end up looking incredibly similar from title to title (and, I would add, not unlike a Snapchat/TikTok filter or other A.I.-generated artwork), then I don’t think it’s going to be of interest – at least, not to me.

There’s already a lot of sameyness and repetitiveness in the way modern games look thanks to many of the industry’s biggest studios using the same handful of game engines. Unreal Engine 4 and 5 are so commonplace nowadays that you can almost always notice their presence from the moment you boot up a title. And there are advantages to that – don’t get me wrong. When I worked in the industry, one of the biggest issues developers (and studios) faced was that skills in one engine or one programming language didn’t automatically translate; if more studios are using the same software, skills are more easily transferable.

But for players, the end result has been that an increasing number of big-budget titles feel… samey. And DLSS 5, if it can actually do what’s being advertised, might just make that particular trend *worse*, not better. Photorealism is not one singular thing – just go to an art gallery and look at photos, and you can see that, even in the real world, there are completely different ways to capture a portrait, a city, a landscape… and more. DLSS 5 seems, to me, to be trying to apply the same techniques to every game shown off – and the results are more miss than hit.

One of the titles selected was Starfield – and if you know me, you’ll know I’m of the opinion that Starfield needs all the help it can get! I once described Starfield’s NPCs as “dead-eyed, waxy-skinned Madame Tussauds rejects,” so *anything* that could be added to the game to “fix” its NPCs should be great. Right?

Look at the image above, which is taken from the opening act of Starfield. Look at the two characters – Heller and Lin. Doesn’t Heller just look like… a meme? You know, the edited “Chad face” meme? And what’s with the lighting? The image is horrifically over-lit, completely negating the vibe of the original scene. I can’t believe Nvidia has got me *defending* Bethesda’s “artistic vision” for Starfield, but the original version of the scene genuinely has more character. The dimly-lit, dusty space evokes the feeling of being on a small outpost at the arse end of space; the DLSS 5 version completely changes the entire tone of the setting. It makes it feel washed-out.

Even if you prefer the more brightly-lit version of Starfield’s opening area, can we at least agree that Bethesda lit the original room a certain way on purpose? Starfield has other indoor areas which are much brighter, so it’s not a technical limitation. It’s clearly a creative choice for that room, at that mining outpost, to be lit the way it was. And DLSS 5 blasted right through that, ignoring all of it.

The one game where I thought DLSS 5 worked best (or “least badly,” I guess) was EA Sports FC. Those kinds of sports games have always been interested in pushing photorealism, and I just felt that DLSS 5 looked most in line with the game’s art style. But the EA Sports FC promo images also threw up some pretty weird and jarring artefacting in the background: in the image above, note how the player on the left, when DLSS 5 has been enabled, seems to stick out from the background quite abruptly. Compare that to the same image without DLSS 5; it’s a much smoother transition from face to background – something that, I would argue, looks more natural and less artificial.

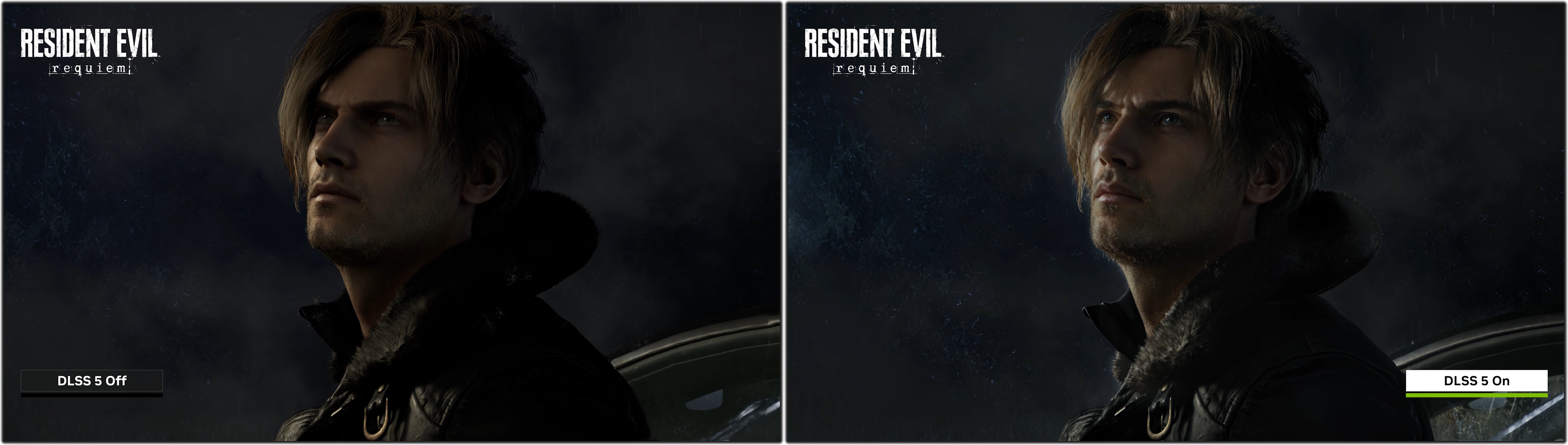

The lighting issue also affects Resident Evil Requiem. The provided images of protagonist Leon (seen below) show DLSS 5 completely changing the way he appears in that scene – and again, as with Starfield above, it looks too bright compared to the original. For a horror game, where environments and lighting matter all the more, I can only describe that as being a potentially huge problem.

The Leon image also has a weird “glassy” effect to the sky in the background, despite seemingly being set outdoors. That could also be a bug – a bug in the very demo images that Nvidia is using to introduce this new technology.

According to reports by folks who’ve seen DLSS 5 for themselves, Nvidia was running the demonstration on not one but *two* of its top-of-the-line RTX 5090 graphics cards. Here in the UK, those cards retail for upwards of £1,800 – so a rig needing two of them is gonna set you back a pretty penny! Cutting-edge innovation often starts expensive and gradually comes down in price – 1080p HD, ray-tracing, 4K, etc. were all in that category. But if DLSS 5 launches, as promised, later this year and it needs the highest of high-end hardware just to get started… well, I guess that rules me out, anyway!

From what I’ve seen, I gotta be honest with you: I’m not impressed. I think there’s potential – in theory – to use A.I. in some way to improve graphical fidelity, add realism, and do the kinds of things that Nvidia is promising DLSS 5 can. But if the end result is games and characters that look like they’re straight out of memes or A.I. art… I don’t see that proving popular and catching on. Even when DLSS 5 had opportunities to genuinely improve some pretty janky-looking character models in a game like Starfield, it still came up short.

Art is complicated, and art is subjective. And I have no doubt that some folks will happily sacrifice “artistic vision” in order to gain a more detailed, photorealistic look. But if there’s one thing we’ve learned from the success of indie games over the past decade-plus, it’s that graphics aren’t the only thing that matters to players. I’m still of the opinion that, if I had to choose between two similar games in the same genre, the better-looking one is going to grab my attention first. And the push for photorealism has led to some absolutely beautiful video games over the past few years. But does adding a generative A.I. layer improve things? Based on the evidence Nvidia chose to submit, I’m gonna say “no.”

However, this could be an idea to keep an eye on. If we haven’t yet reached the ceiling of generative A.I.’s capabilities, and if improvements to this kind of system are possible, it could be an interesting technology for the future. For one thing, it could mean there’s less of a need to remaster and remake older games; if the goal of a remaster, like last year’s Oblivion, for instance, is just to improve the graphical fidelity, well, this kind of system might be able to do that much more easily. So, despite not liking DLSS 5 as it’s been shown today, I can at least see the potential for its use somewhere down the line – assuming that Nvidia can hone it, refine it, and ensure that it really does preserve a game’s unique art style without ruining things like brightness and environmental details, or making faces look… well, like *that*.

Thanks for reading. I’m not a tech expert by any stretch, but I wanted to share my thoughts on this new technology as it pertains to video games. If you want to check out my thoughts on one potential future for generative A.I. in entertainment, click or tap here. And if you want to get my thoughts on last year’s alarming A.I. 2027 paper, you can find that by clicking or tapping here. Until next time!

DLSS and DLSS 5 are trademarks of Nvidia. All titles discussed above are the copyright of their respective developers, studios, and/or publishers. This article contains the thoughts and opinions of one person only and is not intended to cause any offence.